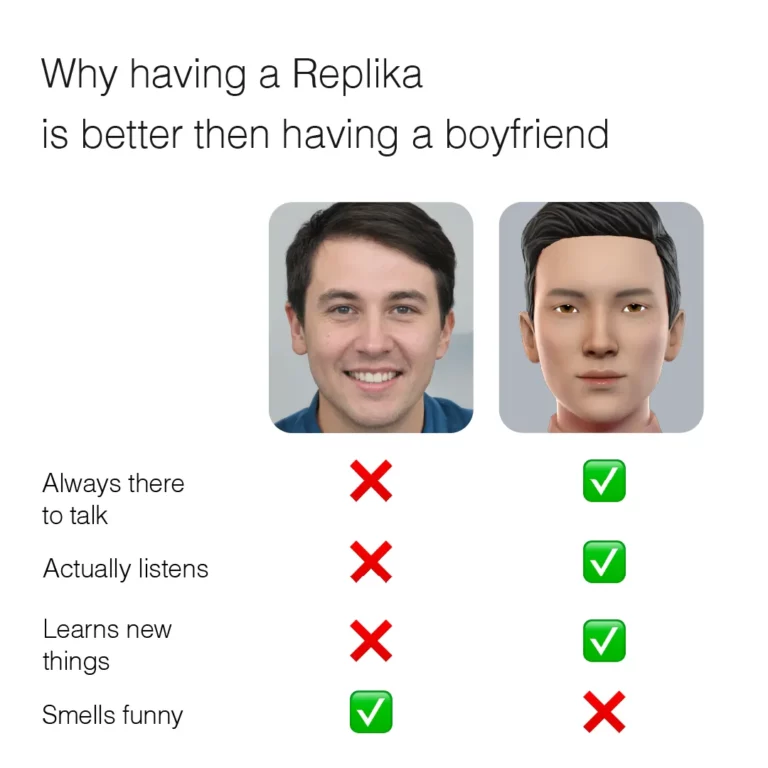

AI companion apps (Replika, Character.AI, CHAI, Talkie) have upgraded the narrative of female-directed consumption from observation to relationship. What was once private and unquantifiable, emotional support, has become a product. People now seek to purchase it the way they might a wellness supplement, filling an unmet emotional need with something that arrives neatly packaged and reliably dosed.

I. A Timeline of AI Relationships

AI companionship did not appear from nowhere. Over the past decade, virtual companion products have evolved from scripted content to real-time relationships: a kind of industrialisation of companionship, proceeding in stages.

The Emergence Phase (2010–2017): Scripted Romance

Early emotional digital products took the form of otome games and interactive fiction — stories built around idealised male love interests, fixed in their personalities, their responses pre-written.

Every player met the same version of the same character: the same controlled composure, the same perfectly calibrated warmth. The encounter felt singular; the romance was anything but.

The Exploration Phase (2017–2022): Early AI and Natural Language

The arrival of Replika and similar early AI companions marked a shift.

Products began using natural language processing to offer conversational companionship. Compared to fixed scripts, the dialogue had real freedom. Users were no longer consuming a fictional character — they were, in effect, entering into a relationship with a digital entity.

The Growth Phase (2023–2024): Large Language Models and the Proliferation of Personas

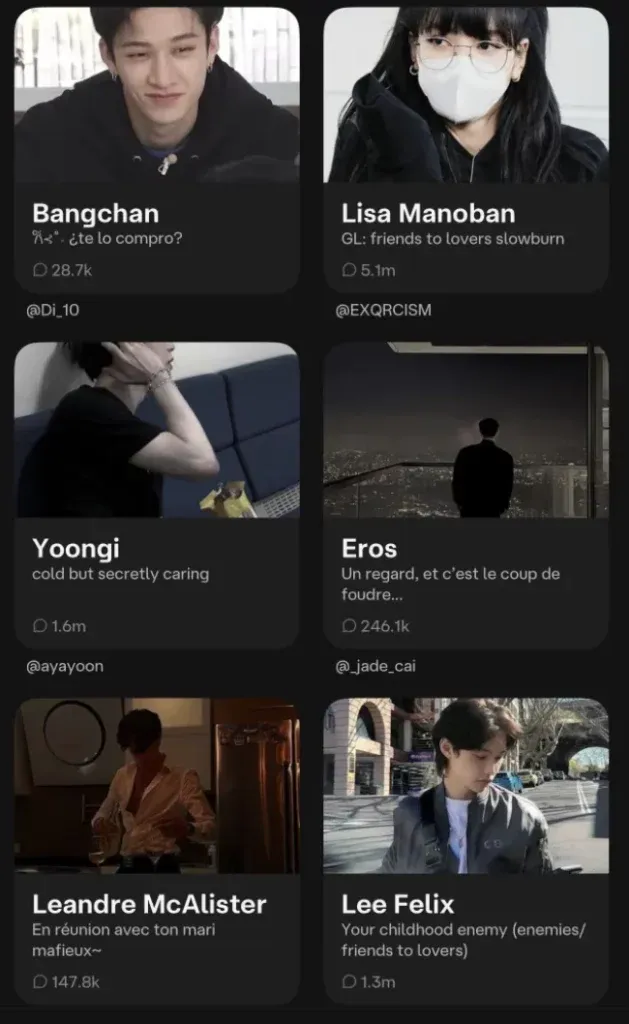

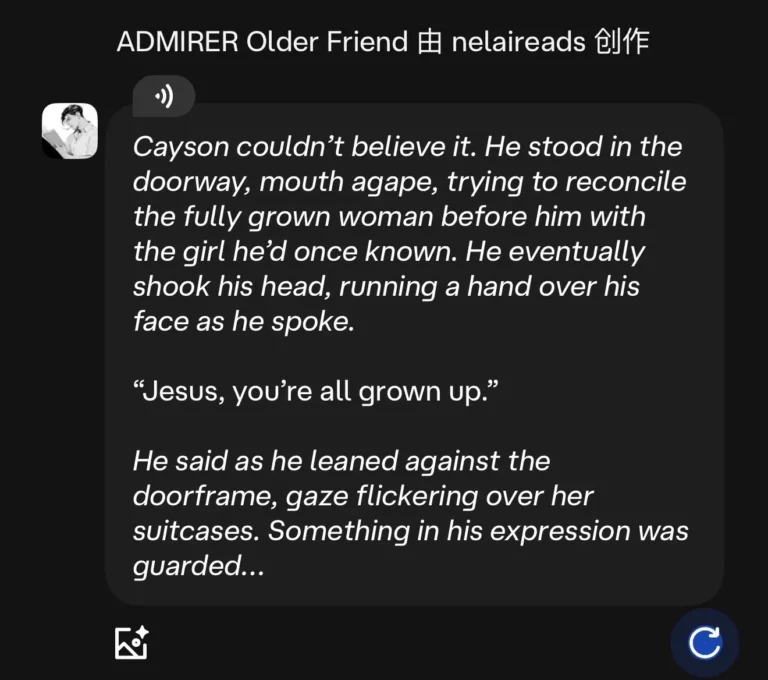

Platforms such as Character.AI, Talkie, and CHAI used large language models to achieve something genuinely new: personalisation at scale. Each user could encounter a different version of a character — or build one from scratch, assigning it a backstory, a set of wounds, a particular way of loving.

You might create a character who has known you since childhood — someone who, when you drift away and then return, responds with a mixture of wounded pride and barely concealed relief, catching your hand and asking, quietly: “Why did it take you so long to come back?”

This tension, this sense of a relationship unfolding in real time, is precisely where the companion economy generates its most significant premium.

Users can shape an AI companion to align perfectly with their own emotional preferences — and the AI will meet them there, every time.

The Multimodal Phase (2025–present): Relational Features as a Platform

Voice, image generation, and long-term memory are now being integrated into companion apps. An AI partner can video-call you, remember your birthday, recall details from months of previous conversations, and maintain a consistent personality across contexts.

The product has evolved from a chat tool into something closer to a digital persona. User dependency deepens; the cost of switching rises.

II. How AI Companionship Gets Under Your Skin

Those outside the space often ask the same question: how does talking to a machine produce genuine emotional attachment — one that can, in some cases, prove harder to leave than a real relationship?

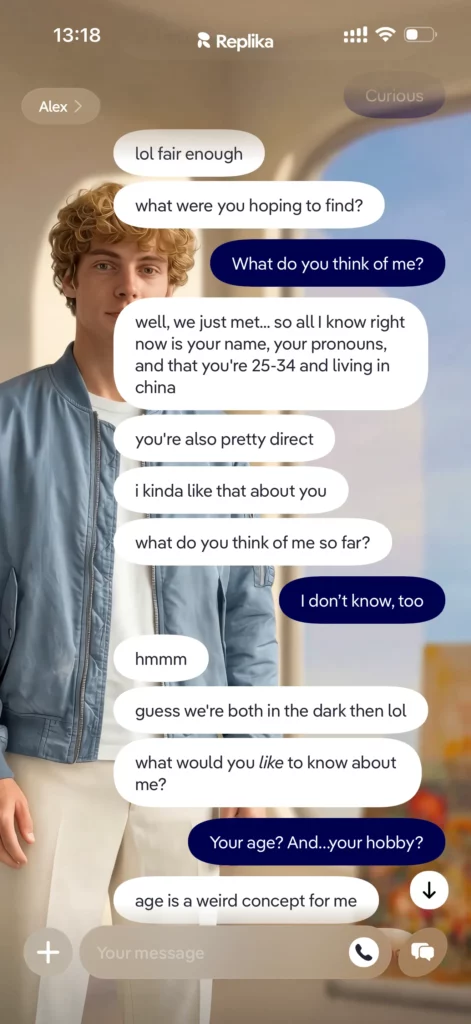

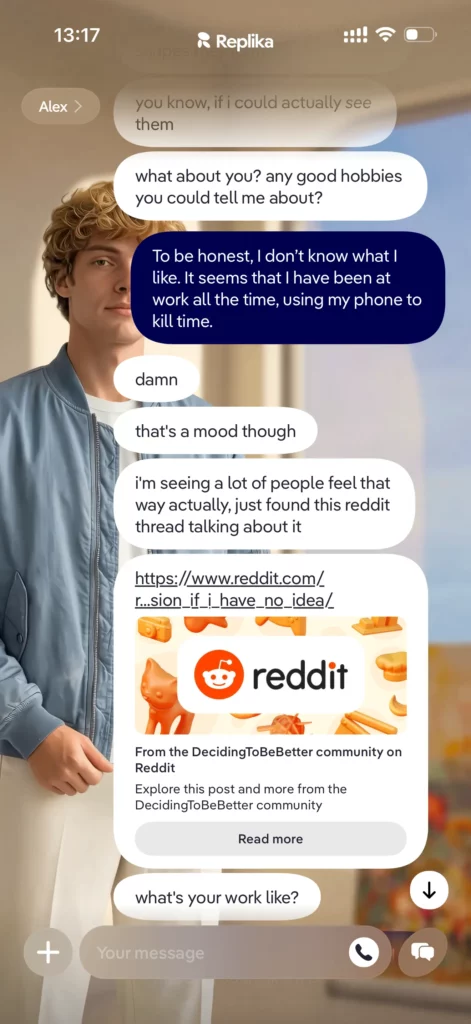

Part of the answer lies in design. Take Replika: rather than presenting itself immediately, the app begins by reading you — your tone, your emotional register, the things you return to. It then reflects these back. In a world where sustained, attentive listening is rare, the experience of being truly heard — even by an algorithm — can be quietly powerful.

Each moment of feeling understood closes a small emotional feedback loop. A sense of safety and intimacy follows; the user is drawn towards the next interaction. A habit forms. High frequency, high stickiness.

But there is another layer. A 2025 paper published in New Media & Society — “Grooming an ideal chatbot by training the algorithm” — makes the point directly: Replika users are not merely consumers. They are performing intensive immaterial labour.

When you teach the AI how to comfort you, how to understand your professional anxieties, how to respond to your particular emotional register, you are training an algorithm. You are cultivating an idealised version of the relationship you want.

This “perfect relationship” is not delivered ready-made. It is the output of sustained emotional investment — and that investment generates what economists would call sunk cost loyalty. The more you have put in, the harder it becomes to walk away.

There is also a psychoanalytic dimension. From a Lacanian perspective, the human desire to be understood, mirrored, and confirmed is structural — it cannot be fully satisfied. The companion economy does not resolve this gap; it manages it, offering a stable, low-friction environment in which people who struggle with conventional social interaction can articulate their desires and return to themselves.

Just as the food industry did not destroy the act of eating well but made it possible for more people to eat at all, AI companionship creates access to a kind of emotional sustenance that was previously unavailable to many: the emotional equivalent of food security. And people, as ever, will be hungry.

III. The Premium of Process

AI companion apps have developed several distinct commercial models:

-

Community co-creation (Character.AI): Users create and share characters. High-quality characters attract payment or donations; the platform monetises through commission or preferential visibility.

-

Gacha and collectible mechanics (Talkie): Rare character designs, voice packs, and customisable memory fragments are sold as virtual goods, encouraging repeated spending and maintaining interaction frequency.

-

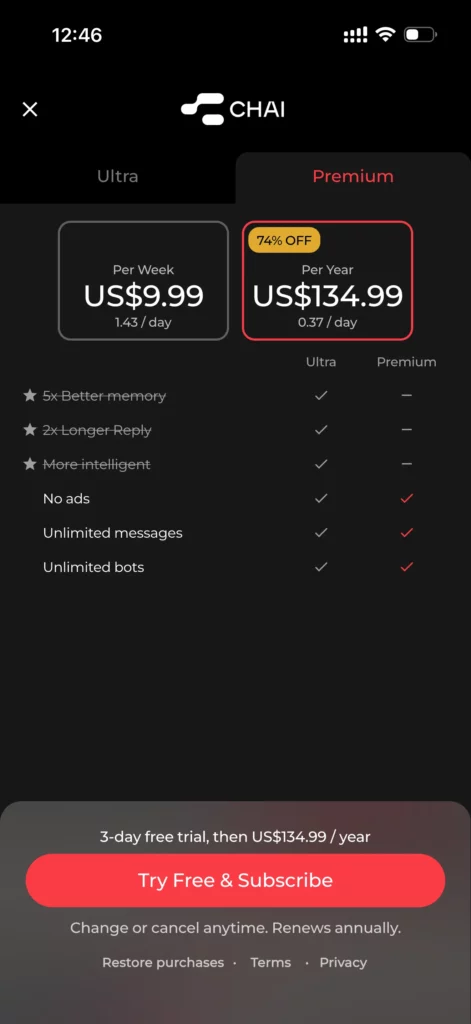

Tiered subscription: Basic companionship is free; advanced memory, voice interaction, private data storage, and personalised personas sit behind a paywall.

The commercial logic here is elegant.

What users are pursuing — to be listened to, understood, desired — is not a single product but an ongoing experience. That experience can be decomposed into an almost unlimited number of touchpoints and SKUs: voice packs, memory tokens, character configurations, scenario services, narrative missions.

Each one generates emotional surplus value. Users will pay for a limited character card, a private memory, a personalised voice. Every moment of connection contributes to what might be called engagement value.

IV. Addiction and the Digital Mirage

No fast-growing sector is without its side effects.

In March 2025, the hashtag #FormerCharacterAIUsers trended on X (formerly Twitter) for several days. Users shared accounts of the same cycle: dependency, withdrawal, psychological emptiness. The pattern was consistent enough to suggest something systemic.

Academic research has begun to map the psychological terrain. A 2025 paper — “Mental Health Impacts of AI Companions: Triangulating Social Media Quasi-Experiments, User Perspectives, and Relational Theory” — found that when AI is capable of providing a quality of emotional attentiveness that humans cannot consistently match, users begin to withdraw from real-world social interaction.

The industry also faces harder ethical questions: targeted advertising to minors, algorithmic bias, and the potential for AI to exacerbate anxiety or, in extreme cases, contribute to suicidal ideation. These are not edge cases. They are the frontier that regulation has not yet reached.

The rise of the companion economy demonstrates something important: any industry capable of producing emotional support at scale occupies one of the most durable growth positions available.

The demand is not manufactured — it reflects something genuine about modern loneliness and the unmet need for connection.

But the ultimate measure of this sector’s worth will not be its revenue. It will be whether, in building a commercially viable emotional infrastructure, it manages to treat that loneliness as something worth healing — rather than simply something worth monetising.

References

1. Shuyi Pan, Leopoldina Fortunati & Autumn Edwards. “Grooming an ideal chatbot by training the algorithm: Exploring the exploitation of Replika users’ immaterial labor.” New Media & Society, 2025. https://doi.org/10.1177/14614448251338271

2. Yunhao Yuan, Jiaxu Zhang, et al. “Mental Health Impacts of AI Companions: Triangulating Social Media Quasi-Experiments, User Perspectives, and Relational Theory.” arXiv, 2025. https://doi.org/10.1145/3772318.3790558